All the misconceptions about Linear Regression

not for the faint-hearted

Linear regression is a widely used statistical technique, but several misconceptions about it persist. Here are some common ones:

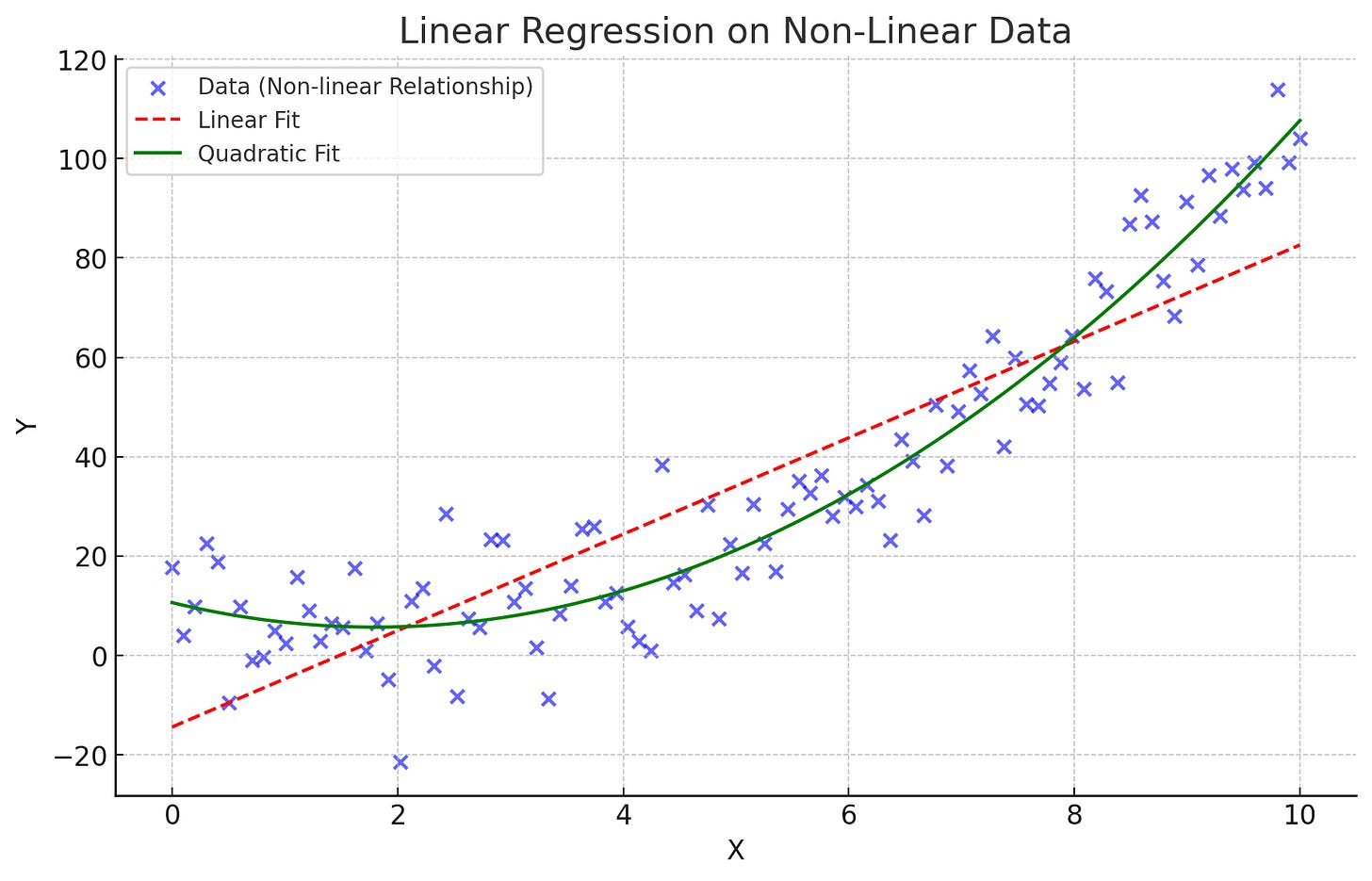

1. Linear Relationships Only:

- Misconception: Linear regression can only be used when the relationship between the variables is linear.

- Reality: Linear regression must be linear in its coefficients, not in the raw predictors, so it can capture non-linear relationships once the predictors are transformed (e.g., polynomial terms, logarithmic transformations). For example, the growth of a bacterial culture over time may not follow a straight line; including a quadratic term (time²) in the model lets it capture the curvature more accurately. Similarly, in many professions, income rises rapidly early in a career, levels off, and may even decrease as retirement approaches. A quadratic regression model (with age and age² as predictors) captures this pattern better than a simple linear model.
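
To make the age and age² example concrete, here is a minimal sketch using NumPy on simulated data. The income figures, the peak at age 50, and the helper name `fit_ols` are assumptions made for illustration, not values from a real dataset. The fitted model is curved in age but still linear in its coefficients.

```python
import numpy as np

rng = np.random.default_rng(0)

# Made-up data: income rises with age, peaks around 50, then dips.
age = np.linspace(22, 65, 200)
income = -40.0 * (age - 50.0) ** 2 + 90_000 + rng.normal(0, 3_000, age.size)

def fit_ols(X, y):
    """Fit ordinary least squares and return (coefficients, R^2)."""
    beta, *_ = np.linalg.lstsq(X, y, rcond=None)
    resid = y - X @ beta
    return beta, 1.0 - resid.var() / y.var()

ones = np.ones_like(age)
X_linear = np.column_stack([ones, age])               # income ~ age
X_quadratic = np.column_stack([ones, age, age ** 2])  # income ~ age + age^2

_, r2_linear = fit_ols(X_linear, income)
beta_quad, r2_quad = fit_ols(X_quadratic, income)

print(f"R^2, straight line:  {r2_linear:.2f}")
print(f"R^2, with age^2:     {r2_quad:.2f}")
print(f"coefficient on age^2: {beta_quad[2]:.1f}")
```

A negative coefficient on age² is what encodes the "rise, level off, decline" shape.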

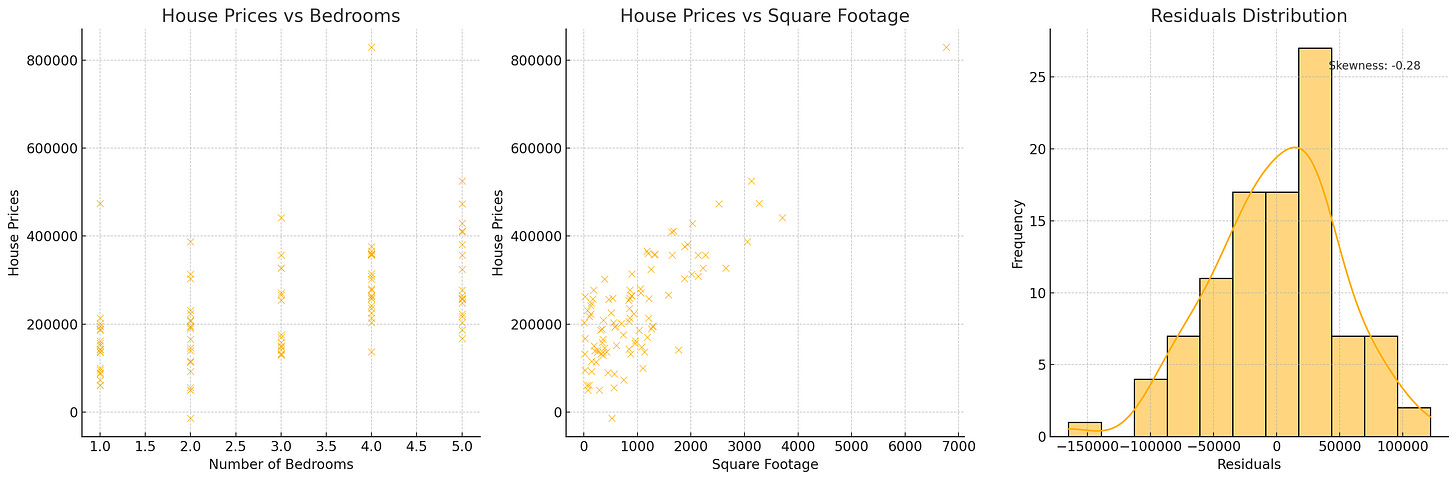

2. Normality of Predictors:

- Misconception: The predictor variables need to be normally distributed.

- Reality: Linear regression does not require the predictors to be normally distributed; it only requires the residuals to be approximately normally distributed for inference purposes (e.g., confidence intervals, hypothesis tests). Consider predicting house prices from the number of bedrooms and square footage: these predictors are often not normally distributed (bedroom counts are discrete, and square footage is often right-skewed). Similarly, when modeling weight based on height and age, heights are often roughly normally distributed, but ages might not be, especially if the sample comes from a specific age group (e.g., children). In both cases the regression model can still be valid as long as the residuals are approximately normally distributed.
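
As a rough illustration, the sketch below simulates a strongly skewed predictor and checks that the residuals are still roughly symmetric and the slope is recovered. The log-normal square footage, the price formula, and the `skewness` helper are all assumptions chosen for the demo.

```python
import numpy as np

rng = np.random.default_rng(1)

# Made-up predictor: square footage drawn from a right-skewed (log-normal) distribution.
sqft = rng.lognormal(mean=7.4, sigma=0.4, size=2_000)
# Assumed data-generating process: price is linear in sqft plus normal noise.
price = 50_000 + 120.0 * sqft + rng.normal(0, 20_000, sqft.size)

X = np.column_stack([np.ones_like(sqft), sqft])
beta, *_ = np.linalg.lstsq(X, price, rcond=None)
residuals = price - X @ beta

def skewness(v):
    """Sample skewness: the mean of the cubed standardized values."""
    z = (v - v.mean()) / v.std()
    return float(np.mean(z ** 3))

print(f"predictor skewness: {skewness(sqft):.2f}")
print(f"residual skewness:  {skewness(residuals):.2f}")
print(f"estimated slope:    {beta[1]:.1f} (true value in this simulation: 120)")
```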

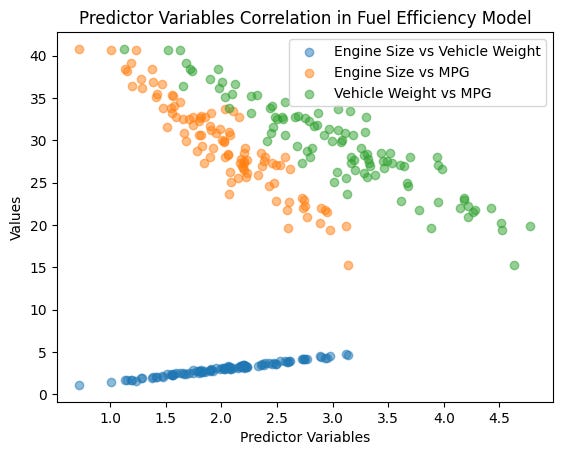

3. Independence of Predictors:

- Misconception: The predictor variables must be independent of each other.

- Reality: Predictor variables can be correlated. However, high multicollinearity makes it difficult to separate the individual effect of each predictor and inflates the variance of the coefficient estimates. In a model predicting car fuel efficiency (MPG), engine size and vehicle weight are likely to be correlated. The model can still be valid, though the coefficient estimates may be less precise. Likewise, in a model of house prices, the number of rooms and total square footage are likely correlated because larger houses tend to have more rooms. This multicollinearity doesn’t invalidate the model, but it makes individual coefficients harder to interpret.
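
One common way to quantify this is the variance inflation factor (VIF): regress each predictor on the others and compute 1 / (1 - R²). The sketch below does that by hand with NumPy. The car data is simulated, and the third predictor (`car_age`) is a hypothetical addition included only as an uncorrelated reference.

```python
import numpy as np

rng = np.random.default_rng(2)
n = 500

# Made-up car data: weight is strongly tied to engine size; car_age is unrelated.
engine_size = rng.normal(2.5, 0.7, n)
weight = 600 * engine_size + rng.normal(0, 150, n)
car_age = rng.normal(8, 3, n)
predictors = np.column_stack([engine_size, weight, car_age])

def vif(X, j):
    """VIF of column j: regress it on the other columns, return 1 / (1 - R^2)."""
    y = X[:, j]
    others = np.column_stack([np.ones(len(X)), np.delete(X, j, axis=1)])
    beta, *_ = np.linalg.lstsq(others, y, rcond=None)
    resid = y - others @ beta
    r2 = 1.0 - resid.var() / y.var()
    return 1.0 / (1.0 - r2)

vifs = [vif(predictors, j) for j in range(predictors.shape[1])]
for name, value in zip(["engine_size", "weight", "car_age"], vifs):
    print(f"VIF({name}) = {value:.1f}")
```

A VIF near 1 means a predictor is nearly unrelated to the others; larger values mean its coefficient is estimated less precisely.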

4. Causation:

- Misconception: A significant relationship in a linear regression implies causation.

- Reality: Linear regression shows association, not causation. Establishing causation requires further investigation, including experimental design or controlling for confounding variables. Finding that increased social media usage is associated with lower academic performance does not mean social media causes poor grades. Other factors, such as stress or lack of sleep, could influence both variables.
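
A small simulation can show how a confounder produces a strong slope with no causal effect behind it. In the sketch below, the causal structure is an assumption built into the simulated data: sleep drives both social media use and grades, and social media has no direct effect on grades. All numbers and the `ols` helper are made up for illustration.

```python
import numpy as np

rng = np.random.default_rng(3)
n = 5_000

# Assumed structure (illustration only): sleep affects BOTH variables,
# and social media use has NO direct effect on grades.
sleep_hours = rng.normal(7, 1, n)
social_media_hours = 6 - 0.5 * sleep_hours + rng.normal(0, 0.5, n)
grades = 50 + 4 * sleep_hours + rng.normal(0, 3, n)

def ols(columns, y):
    """Least-squares coefficients, intercept first."""
    X = np.column_stack([np.ones(len(y)), *columns])
    beta, *_ = np.linalg.lstsq(X, y, rcond=None)
    return beta

naive = ols([social_media_hours], grades)
adjusted = ols([social_media_hours, sleep_hours], grades)

print(f"slope on social media, sleep ignored:    {naive[1]:+.2f}")
print(f"slope on social media, sleep controlled: {adjusted[1]:+.2f}")
```

Controlling for the confounder only helps when it is known and measured, which is why experiments are often needed to support causal claims.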

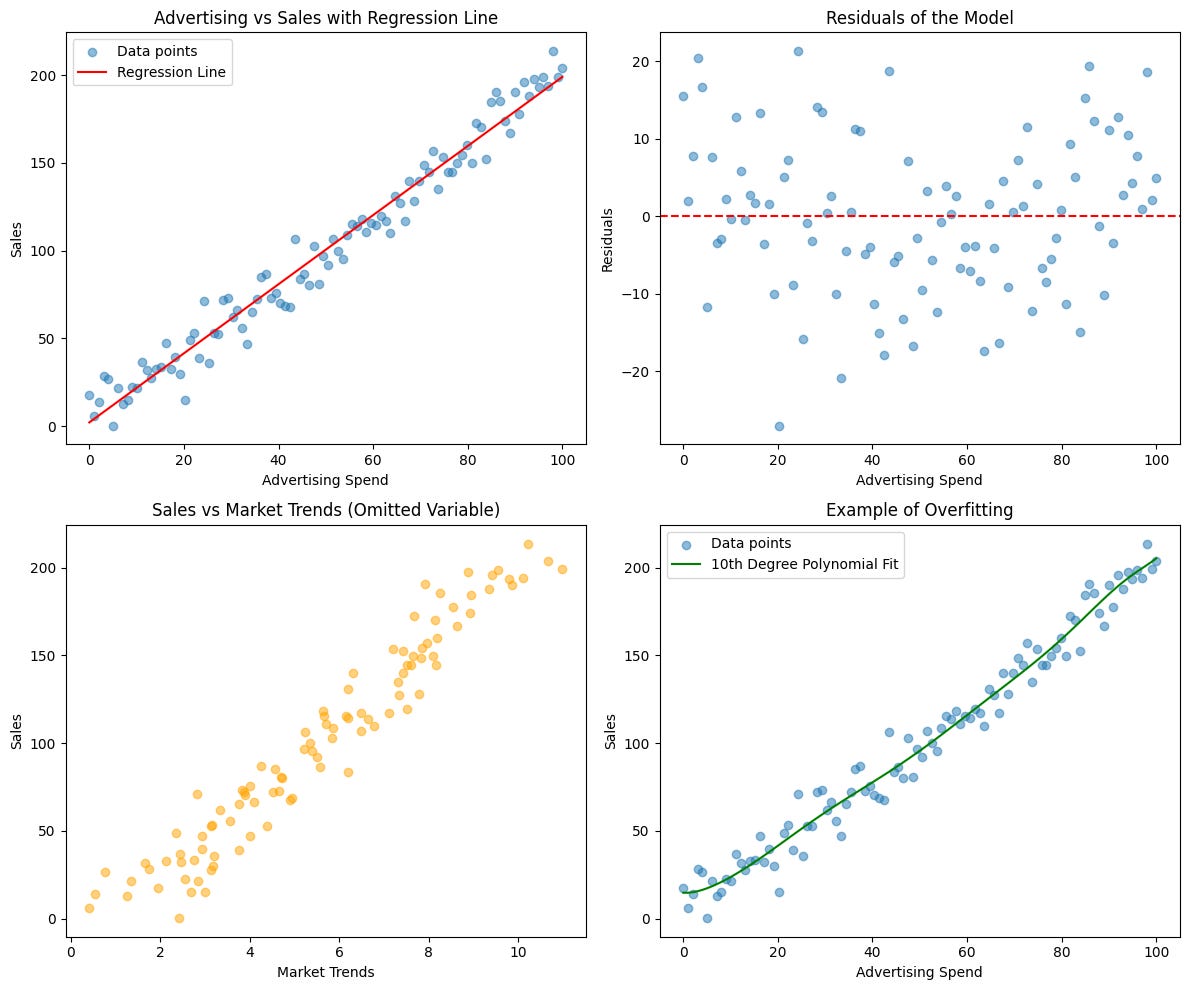

5. Perfect Model Fit:

- Misconception: A good model fit (high R^2) means the model is perfect.

- Reality: A high R^2 does not mean the model is perfect. It indicates the proportion of variance in the outcome explained by the model, but the distribution of residuals, omitted variables, and overfitting should also be considered. For example, a model of sales on advertising spend might show a high R^2, indicating a strong relationship between the two. It might still ignore other critical factors like market trends, competition, and economic conditions, so its coefficients and predictions can be misleading despite the good fit.
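
The sketch below illustrates one of these checks, the distribution of residuals. It fits a straight line to simulated data whose true shape is curved (an assumption of the demo). R^2 comes out high, yet the residuals are systematically positive at the ends and negative in the middle, a sign that the model is misspecified.

```python
import numpy as np

rng = np.random.default_rng(6)

# Made-up data: sales respond to ad spend along a curve, not a straight line.
ad_spend = np.linspace(0, 10, 300)
sales = ad_spend ** 2 + rng.normal(0, 2, ad_spend.size)

X = np.column_stack([np.ones_like(ad_spend), ad_spend])
beta, *_ = np.linalg.lstsq(X, sales, rcond=None)
residuals = sales - X @ beta
r2 = 1.0 - residuals.var() / sales.var()

# Average residual in the low, middle, and high thirds of ad spend.
low, mid, high = (chunk.mean() for chunk in np.array_split(residuals, 3))

print(f"R^2 of the straight-line fit: {r2:.2f}")
print(f"mean residual (low / mid / high spend): {low:+.1f} / {mid:+.1f} / {high:+.1f}")
```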

6. Non-zero Slope Significance:

- Misconception: If the slope of the regression line is non-zero, the predictor is useful.

- Reality: An estimated slope is almost never exactly zero in a sample, even when there is no real relationship. Statistical significance (usually assessed via p-values) is needed to judge whether the observed slope is unlikely to be due to random chance. A model predicting employee performance from coffee consumption might show a non-zero slope, but if the p-value is high (e.g., p > 0.05), the relationship is not statistically significant, and the apparent effect could be due to random chance.
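
To see this with numbers, the sketch below computes a p-value for a slope using a permutation test, a simple stand-in for the usual t-test that needs only NumPy. The coffee and performance data are simulated. One outcome is generated with no relationship to coffee at all, and the other with a built-in effect.

```python
import numpy as np

rng = np.random.default_rng(4)

def slope(x, y):
    """OLS slope of y on x (model with an intercept)."""
    xc = x - x.mean()
    return float(xc @ (y - y.mean()) / (xc @ xc))

def permutation_p_value(x, y, n_perm=2_000):
    """Two-sided p-value: share of shuffled datasets with a slope at least as extreme."""
    observed = abs(slope(x, y))
    hits = sum(abs(slope(x, rng.permutation(y))) >= observed for _ in range(n_perm))
    return (hits + 1) / (n_perm + 1)

# Made-up data for 40 employees.
coffee_cups = rng.poisson(3, 40).astype(float)
unrelated_performance = rng.normal(70, 10, 40)                     # no true effect
related_performance = 70 + 3 * coffee_cups + rng.normal(0, 5, 40)  # true effect of 3

for label, perf in [("unrelated", unrelated_performance), ("related", related_performance)]:
    print(f"{label}: slope = {slope(coffee_cups, perf):+.2f}, "
          f"p = {permutation_p_value(coffee_cups, perf):.3f}")
```

Both slopes come out non-zero; the p-value is what separates a slope consistent with noise from one that is not.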

7. All Assumptions Must Be Strictly Met:

- Misconception: All the assumptions of linear regression (linearity, independence, homoscedasticity, normality of residuals) must be strictly met for the model to be useful.

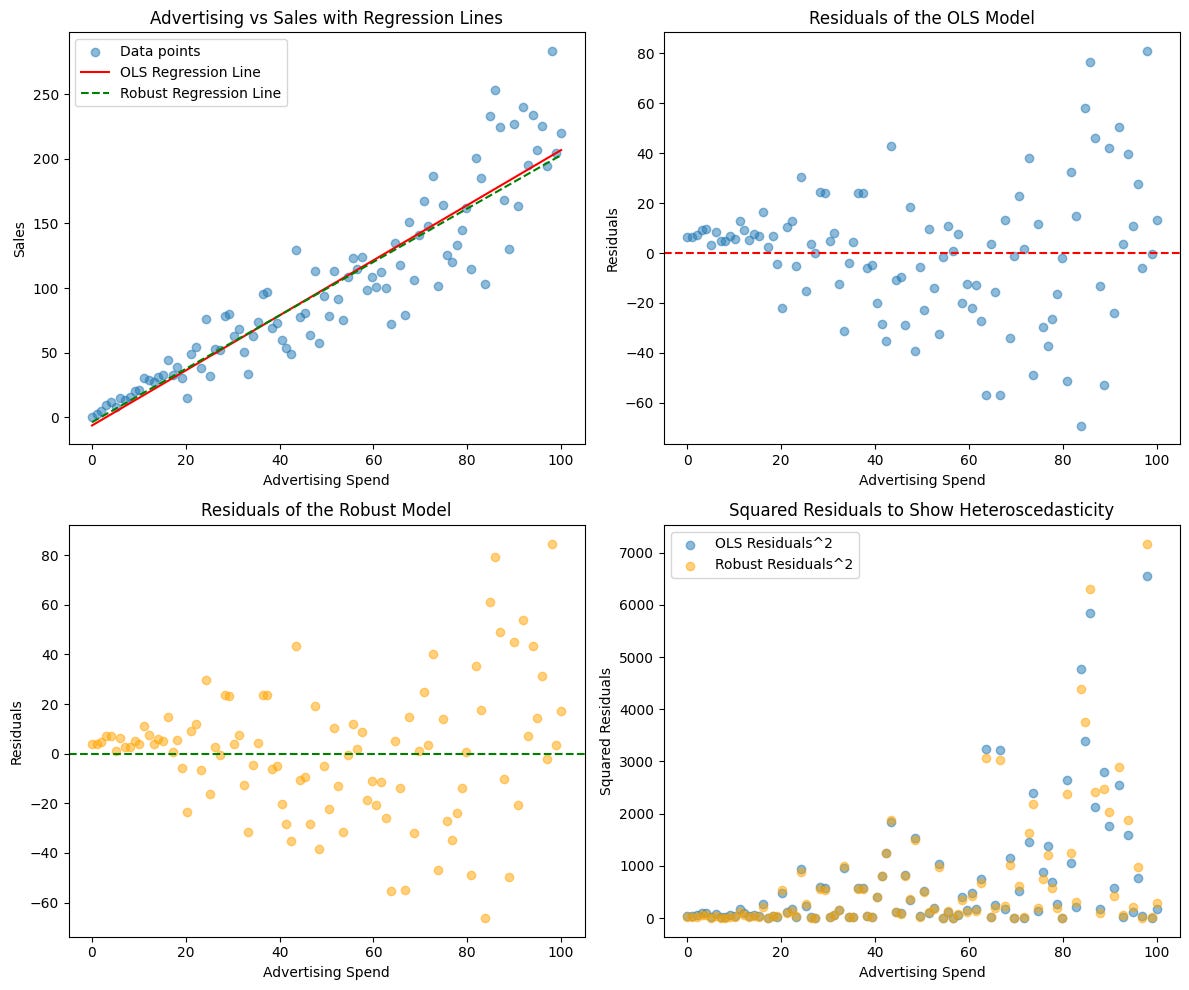

- Reality: Violations of assumptions can affect the results, but in practice, minor violations may not severely impact the model’s usefulness. Diagnostics can detect the issues, and robust regression techniques can help mitigate them. For example, a model predicting sales from advertising spend might show some heteroscedasticity (residual variance that changes with the predictor). While not ideal, the model can still provide useful insights, especially if robust standard errors are used.
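
As a sketch of what "robust standard errors" means, the code below computes both the classical standard errors and the HC0 (White) heteroscedasticity-robust version by hand. The data is simulated, and the noise is assumed to grow with ad spend. In practice a statistics library would normally do this calculation; the manual version is only meant to show the idea.

```python
import numpy as np

rng = np.random.default_rng(5)
n = 2_000

# Made-up data: the noise standard deviation grows with ad spend (heteroscedasticity).
ad_spend = rng.uniform(1, 10, n)
sales = 20 + 5 * ad_spend + rng.normal(0, 1, n) * 0.3 * ad_spend ** 2

X = np.column_stack([np.ones(n), ad_spend])
XtX_inv = np.linalg.inv(X.T @ X)
beta = XtX_inv @ X.T @ sales
resid = sales - X @ beta

# Classical standard errors assume one constant error variance.
sigma2 = resid @ resid / (n - X.shape[1])
classical_se = np.sqrt(np.diag(sigma2 * XtX_inv))

# HC0 "sandwich" estimator: each observation contributes its own squared residual.
meat = X.T @ (X * resid[:, None] ** 2)
robust_se = np.sqrt(np.diag(XtX_inv @ meat @ XtX_inv))

print(f"slope estimate:     {beta[1]:.2f}")
print(f"classical SE:       {classical_se[1]:.3f}")
print(f"robust (HC0) SE:    {robust_se[1]:.3f}")
```

The slope estimate itself is unchanged; only the uncertainty attached to it is recomputed.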

8. Extrapolation Reliability:

- Misconception: Linear regression can be reliably used for extrapolation beyond the range of the data.

- Reality: Extrapolation can be highly unreliable as the relationship outside the observed data range might not follow the same pattern. Using a linear model to predict future stock prices based on past performance can be very unreliable. Stock prices are influenced by many factors not captured in historical trends, and the relationship may change over time.
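
The sketch below shows the effect on simulated data rather than stock prices. The true relationship is assumed to flatten out (a logarithmic curve), the line is fitted only on x between 0 and 5, and the prediction is then checked at x = 20. The function `true_relationship` and all numbers are assumptions of the demo.

```python
import numpy as np

rng = np.random.default_rng(7)

def true_relationship(x):
    """Assumed ground truth for the simulation: growth that flattens out."""
    return 10.0 * np.log1p(x)

# Observations only cover x between 0 and 5.
x_obs = np.linspace(0, 5, 100)
y_obs = true_relationship(x_obs) + rng.normal(0, 0.5, x_obs.size)

# np.polyfit returns coefficients from the highest degree down: slope, then intercept.
slope, intercept = np.polyfit(x_obs, y_obs, deg=1)

in_range_rmse = float(np.sqrt(np.mean((slope * x_obs + intercept - y_obs) ** 2)))
x_far = 20.0
extrapolation_error = float(abs(slope * x_far + intercept - true_relationship(x_far)))

print(f"typical error inside the observed range: {in_range_rmse:.1f}")
print(f"error when extrapolating to x = {x_far:.0f}:     {extrapolation_error:.1f}")
```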

9. Single Method Sufficiency:

- Misconception: Linear regression is sufficient for all types of data analysis problems.

- Reality: Different problems require different methods. Linear regression is one tool among many (e.g., logistic regression for binary outcomes, time series analysis for temporal data). For example, predicting whether a patient has a disease based on medical test results calls for logistic regression, since the outcome is binary; linear regression is better suited to continuous outcomes.
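
One way to see why, sketched below on simulated data: a linear regression fitted to a 0/1 outcome can predict "probabilities" below 0 or above 1, while logistic regression cannot. The logistic fit here is a bare-bones Newton's method written for illustration (the `fit_logistic` helper is hypothetical, not a library function), and the disease data is made up.

```python
import numpy as np

rng = np.random.default_rng(8)
n = 1_000

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

# Made-up data: a standardized test result and a binary disease indicator.
test_result = rng.normal(0, 1, n)
has_disease = (rng.uniform(size=n) < sigmoid(-0.5 + 2.0 * test_result)).astype(float)
X = np.column_stack([np.ones(n), test_result])

# Linear regression fitted directly to the 0/1 outcome.
beta_linear, *_ = np.linalg.lstsq(X, has_disease, rcond=None)

def fit_logistic(X, y, n_iter=25):
    """Logistic regression via Newton's method (assumes the classes overlap)."""
    beta = np.zeros(X.shape[1])
    for _ in range(n_iter):
        p = sigmoid(X @ beta)
        gradient = X.T @ (y - p)
        hessian = X.T @ (X * (p * (1 - p))[:, None])
        beta = beta + np.linalg.solve(hessian, gradient)
    return beta

beta_logit = fit_logistic(X, has_disease)

# Compare predictions across a range of test results.
grid = np.column_stack([np.ones(7), np.linspace(-3, 3, 7)])
linear_pred = grid @ beta_linear
logistic_pred = sigmoid(grid @ beta_logit)

print("linear:  ", np.round(linear_pred, 2))
print("logistic:", np.round(logistic_pred, 2))
```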

Understanding these misconceptions helps in appropriately applying linear regression and interpreting its results more accurately.